Introduction

After a ton of work, over the next week or two we will be introducing JWT tokens for authentication in ApiOpenStudio.

This will:

- Significantly increase the speed of resource requests.

- Make individual transactions stateless.

- Maintain the granular access rights to resources, based on the user’s access rights, the resource’s holding account & application, and of course the resource itself.

- Make it viable and easy for enterprise clients to use 3rd party authorisation services.

This article will take a look at the JWT specification, current practices and how JWT is used in ApiOpenStudio.

Rationale

While trying to optimise the authentication DB queries that are performed before a resource is processed, we realised that the queries were long: they joined multiple tables and used several sub-queries in order to take into account our extensive range of access rights, such as Administrators, Account Managers, Application Managers, Developers and Consumers. Although this would obviously be faster in a production environment, it was adding 1-2 seconds of processing time to calls in development environments…

Because this had to be calculated every time a resource was called!

The solution was to introduce stateless authorisation, in the form of JWT tokens.

JWT is rapidly becoming the industry standard for authentication. JWT tokens have what are called Claims, which are individual name/value pairs within the body of the token. The token itself is encrypted and secure, which means that sensitive data can be securely included in it. The user’s roles and permissions can therefore be included as a claim and only need to be fetched once (during the JWT token GET call).

In addition, JWT tokens have a TTL, which means that we do not need to store a bearer token in the DB against each user and use it to fetch that user each time a request is received. If the token is valid, there is no need to fetch the user and check the bearer token TTL.

Here are some scenarios where JSON Web Tokens are useful:

Authorisation: This is the most common scenario for using JWT. Once the user is logged in, each subsequent request will include the JWT, allowing the user to access routes, services, and resources that are permitted with that token. Single Sign On is a feature that widely uses JWT nowadays, because of its small overhead and its ability to be easily used across different domains.

Information Exchange: JSON Web Tokens are a good way of securely transmitting information between parties. Because JWTs can be signed—for example, using public/private key pairs—you can be sure the senders are who they say they are. Additionally, as the signature is calculated using the header and the payload, you can also verify that the content hasn’t been tampered with.

auth0.com, JSON Web Token Introduction – jwt.io, viewed 1 September 2021, https://jwt.io/introduction.

How does JWT work?

Pronunciation

Before we start, for a bit of fun I’d like to set the record straight. I’m not one of those boring “absolutists” who insist that GIF should be pronounced “JIF”, even though the original creator of the tech obviously could not spell when he declared that was the pronunciation. But many people are driving me bananas by mispronouncing JWT. Just pronounce it as “JOT”: it’s also easier to say than the most common variant, “Jay-Dubbyah-Tee” (no relation to a former US president).

The suggested pronunciation of JWT is the same as the English word “jot”.

Jones M, Microsoft, Bradley J, Ping Identity, Sakimura N, NRI 2015, JSON Web Token (JWT), Internet Engineering Task Force (IETF), viewed 1 September 2021, https://datatracker.ietf.org/doc/html/rfc7519#section-1.

JWT overview

Now for the technical stuff…

JWTs represent a set of claims as a JSON object that is encoded in a JWS and/or JWE structure. This JSON object is the JWT Claims Set. As per Section 4 of RFC 7159 [RFC7159], the JSON object consists of zero or more name/value pairs (or members), where the names are strings and the values are arbitrary JSON values. These members are the claims represented by the JWT. This JSON object MAY contain whitespace and/or line breaks before or after any JSON values or structural characters, in accordance with Section 2 of RFC 7159 [RFC7159].

The member names within the JWT Claims Set are referred to as Claim Names. The corresponding values are referred to as Claim Values.

The contents of the JOSE Header describe the cryptographic operations applied to the JWT Claims Set. If the JOSE Header is for a JWS, the JWT is represented as a JWS and the claims are digitally signed or MACed, with the JWT Claims Set being the JWS Payload. If the JOSE Header is for a JWE, the JWT is represented as a JWE and the claims are encrypted, with the JWT Claims Set being the plaintext encrypted by the JWE. A JWT may be enclosed in another JWE or JWS structure to create a Nested JWT, enabling nested signing and encryption to be performed.

A JWT is represented as a sequence of URL-safe parts separated by period (‘.’) characters. Each part contains a base64url-encoded value. The number of parts in the JWT is dependent upon the representation of the resulting JWS using the JWS Compact Serialization or JWE using the JWE Compact Serialization.

Jones M, Microsoft, Bradley J, Ping Identity, Sakimura N, NRI 2015, JSON Web Token (JWT), Internet Engineering Task Force (IETF), viewed 1 September 2021, https://datatracker.ietf.org/doc/html/rfc7519#section-3.

What does this mean?

A token is made up of 3 parts: the header, the payload and the signature.

The header of the token defines the cryptography applied to the payload, and the payload is a JSON structure that is carried either in a JWS (signed, but only Base64Url encoded) or in a JWE (encrypted).

JWS does not keep the payload confidential, since the payload is only Base64Url-encoded JSON, and encoding is not encryption: it is trivial to decode. The signature stops the token from being altered, but not from being read. Because our token carries authentication data for access to the resource, this is not suitable in our case.
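To illustrate how little protection encoding alone gives, here is a minimal sketch that reads the payload of a signed-only token without any key. The token is made up for the example, and the helper names (`b64url_decode`, `b64url_encode`, `read_unverified_payload`) are hypothetical, not part of ApiOpenStudio.

```python
import base64
import json


def b64url_decode(segment: str) -> bytes:
    """Decode a Base64Url segment, restoring the padding that JWTs strip."""
    padding = "=" * (-len(segment) % 4)
    return base64.urlsafe_b64decode(segment + padding)


def b64url_encode(raw: bytes) -> str:
    """Encode bytes as Base64Url without trailing padding."""
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def read_unverified_payload(token: str) -> dict:
    """Return the claims of a three-part token without checking the signature."""
    _header_b64, payload_b64, _signature_b64 = token.split(".")
    return json.loads(b64url_decode(payload_b64))


# A made-up token: the signature part is a placeholder, and nothing stops
# a reader from decoding the payload anyway.
header = b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
payload = b64url_encode(json.dumps({"uid": 42, "roles": ["developer"]}).encode())
token = f"{header}.{payload}.not-a-real-signature"

print(read_unverified_payload(token))
```

No secret or key is involved at any point, which is why roles and permissions should not travel in a token whose payload is merely encoded.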

JWE is more suitable to our case, because the JSON payload is encrypted (as defined in the header), and the JWT token keys are stored securely on the ApiOpenStudio server, so only the API server can decrypt the body.

There are multiple encryption standards available, and in our case we are using the fantastic lcobucci-jwt library, which is sponsored by one of the leading Authorisation services: Auth0. This provides support for many, many symmetric and asymmetric algorithms.

We have not implemented symmetric algorithms, which use the same key for encryption and decryption and are therefore less secure. With asymmetric algorithms, the public key can be used by any client, and the public/private keys are stored in a secure location on the ApiOpenStudio server.

JWT token structure

In its compact form, a JSON Web Token consists of three parts separated by dots (.), which are:

- Header

- Payload

- Signature

Therefore, a JWT typically looks like the following.

xxxxx.yyyyy.zzzzz

Header

The header typically consists of two parts: the type of the token, which is JWT, and the signing algorithm being used, such as HMAC SHA256 or RSA.

For example:

{
  "alg": "RS256",
  "typ": "JWT"
}

Then, this JSON is Base64Url encoded to form the first part of the JWT.

Payload

The second part of the token is the payload, which contains the claims. There are three types of claims: registered, public, and private claims.

- Registered claims: These are a set of predefined claims which are not mandatory but recommended, to provide a set of useful, interoperable claims. Some of them are: iss (issuer), exp (expiration time), sub (subject), aud (audience). Notice that the claim names are only three characters long as JWT is meant to be compact.

- Public claims: These can be defined at will by those using JWTs. But to avoid collisions they should be defined in the IANA JSON Web Token Registry or be defined as a URI that contains a collision resistant namespace.

- Private claims: These are the custom claims created to share information between parties that agree on using them and are neither registered nor public claims.

An example payload could be:

{
  "iss": "my.apiopenstudio.com",
  "sub": "1234567890",
  "name": "John Dory",
  "admin": true
}

The payload is then Base64Url encoded to form the second part of the JSON Web Token.
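As a rough sketch of the two encoding steps described so far, the following builds the first two parts of a token from a header and a payload. It uses `RS256`, the registered algorithm name for RSA with SHA-256, and the helper names (`b64url_encode`, `encode_part`) are assumptions made for illustration.

```python
import base64
import json


def b64url_encode(raw: bytes) -> str:
    """Encode bytes as Base64Url without trailing padding."""
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def encode_part(part: dict) -> str:
    """Serialise a header or payload compactly, then Base64Url encode it."""
    compact = json.dumps(part, separators=(",", ":")).encode("utf-8")
    return b64url_encode(compact)


header = {"alg": "RS256", "typ": "JWT"}
payload = {
    "iss": "my.apiopenstudio.com",
    "sub": "1234567890",
    "name": "John Dory",
    "admin": True,
}

# The first two parts of the token, joined by a dot. The signature is
# calculated over exactly this string.
signing_input = encode_part(header) + "." + encode_part(payload)
print(signing_input)
```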

Signature

To create the signature part you have to take the encoded header, the encoded payload, a secret, the algorithm specified in the header, and sign that.

The signature is used to verify the message wasn’t changed along the way, and in the case of tokens signed with a private key, it can also verify that the sender of the JWT is who it says it is.
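The signing step can be sketched with an HMAC SHA256 signature. HMAC is used here only because it is available in the Python standard library; signing with an RSA private key follows the same shape but needs a third-party cryptography library. The secret and the function names (`sign_hs256`, `verify_hs256`) are hypothetical.

```python
import base64
import hashlib
import hmac


def b64url_encode(raw: bytes) -> str:
    """Encode bytes as Base64Url without trailing padding."""
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def sign_hs256(signing_input: str, secret: bytes) -> str:
    """Sign '<encoded header>.<encoded payload>' with HMAC SHA256."""
    digest = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return b64url_encode(digest)


def verify_hs256(token: str, secret: bytes) -> bool:
    """Recompute the signature and compare it in constant time."""
    signing_input, _, signature = token.rpartition(".")
    expected = sign_hs256(signing_input, secret)
    return hmac.compare_digest(expected, signature)


secret = b"example-secret-for-illustration-only"
signing_input = "xxxxx.yyyyy"  # stands in for the encoded header and payload
token = signing_input + "." + sign_hs256(signing_input, secret)

print(verify_hs256(token, secret))                              # untouched token
print(verify_hs256(token.replace("yyyyy", "yyyyz"), secret))    # altered payload
```

Changing a single character of the header or payload produces a different signature, which is how the receiver detects tampering.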

Using JWT tokens

The final xxxxx.yyyyy.zzzzz token is sent as a bearer token in the request header, e.g.:

Authorization: Bearer <token>
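From the client side, attaching the token might look like the sketch below. The URL is a placeholder and `build_request` is a hypothetical helper; the request is only constructed here, not sent.

```python
import urllib.request


def build_request(url: str, token: str) -> urllib.request.Request:
    """Build a GET request that carries the JWT as a bearer token."""
    return urllib.request.Request(
        url,
        headers={"Authorization": f"Bearer {token}"},
        method="GET",
    )


# Placeholder URL and token, for illustration only.
request = build_request("https://api.example.com/some/resource", "xxxxx.yyyyy.zzzzz")
print(request.get_header("Authorization"))
```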

When the request is received by the API server, it will first confirm that the token is valid by checking that it can be decrypted and by checking the issuer, the expiry date and the mandatory claims.

If this all passes, processing can continue. Otherwise, a 401 error response is sent and the client will need to generate a new token, using the provided core token request (auth/token), and resend the request with the token that was received.
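The checks above could be sketched roughly as follows, assuming the token has already been decrypted into a dictionary of claims. This is not the ApiOpenStudio implementation: the function name, the list of mandatory claims and the status-code return value are all assumptions made for the example.

```python
import time
from typing import Optional

# Claims treated as mandatory in this sketch (an assumption).
MANDATORY_CLAIMS = ("iss", "aud", "iat", "exp", "uid", "roles")


def status_for_claims(claims: dict, expected_issuer: str,
                      now: Optional[float] = None) -> int:
    """Return 200 if the decoded claims pass the checks, otherwise 401."""
    current_time = time.time() if now is None else now
    if any(name not in claims for name in MANDATORY_CLAIMS):
        return 401  # a mandatory claim is missing
    if claims["iss"] != expected_issuer:
        return 401  # issued by someone we do not trust
    if claims["exp"] <= current_time:
        return 401  # the token has passed its expiry time
    return 200


claims = {
    "iss": "my.apiopenstudio.com",
    "aud": "my-api",
    "iat": 1000,
    "exp": 4600,
    "uid": 7,
    "roles": [],
}
print(status_for_claims(claims, "my.apiopenstudio.com", now=2000))  # still valid
print(status_for_claims(claims, "my.apiopenstudio.com", now=5000))  # expired
```

None of these checks touch the database, which is where the speed gain comes from.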

From a process point of view, this is exactly the same as before. However, as mentioned, we are using asymmetric encryption, so we can include data in the token payload, which means that ApiOpenStudio does not need to fetch user data in order to validate the user’s permissions against the resource. That data only needs to be fetched once, when the token is generated.

Minor issue (caveat)

The original authorisation tokens were stateful, i.e. the token was stored against the user, along with the TTL for the token. This meant that if a user was banned, deleted or made inactive, they would instantly be unable to make any further API requests, either because the user was no longer present/active or because the token was no longer valid.

JWT tokens are stateless. This means that if a user has a valid token, they can still use it until it expires regardless of whether they are made inactive or deleted (they would only be prevented from fetching a fresh token).

This can be mitigated by setting a global jwt_life of less than 1 hour (the default). However, this needs to be balanced against the increase in requests, since a lower token TTL means tokens pass their expiry date more frequently and clients must request new ones more often.
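The trade-off can be put into rough numbers. The sketch below assumes a client that is continuously active for 24 hours and requests a new token only when the old one expires; it reads the default jwt_life as 3600 seconds, and the function name is hypothetical.

```python
import math

SECONDS_PER_DAY = 24 * 60 * 60


def token_requests_per_day(jwt_life_seconds: int) -> int:
    """Token requests per day for one continuously active client."""
    return math.ceil(SECONDS_PER_DAY / jwt_life_seconds)


for jwt_life in (3600, 900, 300):
    # jwt_life is also the longest a deactivated user's token stays usable.
    print(jwt_life, token_requests_per_day(jwt_life))
```

Under these assumptions, dropping from one hour to five minutes cuts the exposure window to a twelfth, at the cost of twelve times as many token requests.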

Another mitigation for extreme cases can be to add the IP address of the client to the blacklist – this will prevent all future calls from that location, immediately.

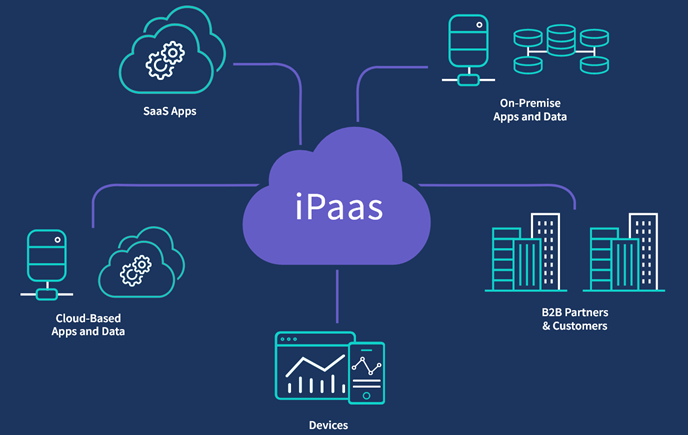

3rd party authorisation integration

Because the token requires certain client data to be present, the user details and roles will need to be accessible by the authorisation provider, so that they can generate a valid body. Thankfully, most reputable providers allow you to upload these details to your account with them, and also provide ways to ensure that these details are always current and up to date.

You will need to ensure that the following mandatory claims are included:

- iss – JWT issuer (your auth provider)

- aud – permitted for (your api)

- iat – JWT issued time

- exp – JWT expiry time

The following custom claims must also be included:

- uid – user ID

- roles – complete list of roles and accounts/applications that the user is associated with
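Put together, a claims set containing all of the items listed above might be assembled like this. It is only a sketch: the issuer and audience values are placeholders, `build_claims` is a hypothetical helper, and how your authorisation provider lets you configure claims will differ.

```python
import json
import time
from typing import Optional


def build_claims(issuer: str, audience: str, uid: int, roles: list,
                 jwt_life: int = 3600, now: Optional[float] = None) -> dict:
    """Assemble the mandatory and custom claims for a token body."""
    issued_at = int(time.time() if now is None else now)
    return {
        "iss": issuer,                 # JWT issuer (your auth provider)
        "aud": audience,               # permitted for (your API)
        "iat": issued_at,              # JWT issued time
        "exp": issued_at + jwt_life,   # JWT expiry time
        "uid": uid,                    # user ID
        "roles": roles,                # roles with their accounts/applications
    }


claims = build_claims(
    issuer="auth.example.com",        # placeholder provider
    audience="api.example.com",       # placeholder API
    uid=7,
    roles=[{"role_name": "consumer", "accid": 34, "appid": 5}],
    now=1630454400,
)
print(json.dumps(claims, indent=2))
```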

The roles object

This is in the JSON object format of:

roles: [
  {
    "role_name": <role_machine_name>,
    "accid": <account_id>,
    "appid": <application_id>
  }
]

For example:

roles: [
  {
    "role_name": "administrator",
    "accid": null,
    "appid": null
  },
  {
    "role_name": "consumer",
    "accid": 34,
    "appid": 5
  },
  {
    "role_name": "developer",
    "accid": 34,
    "appid": 5
  },
  ....
]

Note that:

- “administrator” does not require accid or appid

- “account_manager” does not require appid
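One way a consumer of the token could query this structure is sketched below. The matching rule is an assumption made for illustration (a null accid or appid on a role entry is treated as "applies to any"), and `has_role` is a hypothetical helper, not the ApiOpenStudio permission check.

```python
from typing import Optional


def has_role(roles: list, role_name: str,
             accid: Optional[int] = None, appid: Optional[int] = None) -> bool:
    """Check whether the roles claim grants role_name for an account/application.

    Assumption: a null accid or appid on an entry matches any value.
    """
    for entry in roles:
        if entry["role_name"] != role_name:
            continue
        account_ok = entry.get("accid") is None or entry.get("accid") == accid
        application_ok = entry.get("appid") is None or entry.get("appid") == appid
        if account_ok and application_ok:
            return True
    return False


roles = [
    {"role_name": "administrator", "accid": None, "appid": None},
    {"role_name": "consumer", "accid": 34, "appid": 5},
    {"role_name": "developer", "accid": 34, "appid": 5},
]

print(has_role(roles, "developer", accid=34, appid=5))   # matching entry exists
print(has_role(roles, "developer", accid=34, appid=6))   # different application
print(has_role(roles, "administrator"))                  # no account/application needed
```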

Summary

We’re really excited to be implementing this technology: decreasing resource processing time, increasing the security of the API, and making it even easier for enterprise users to implement ApiOpenStudio on a large scale.

I’ll be honest with you too: it was really good fun to implement, and we got a total nerd-on doing the research and coding for this!